Most people understand deepfake technology through the lens of fun apps that allow users to prank friends by swapping or modifying faces in photos.

However, when such tools are used to fake identities and commit fraud, the technology is being abused. Deepfakes are audio or video content that is either wholly created or altered by AI or machine learning (ML) to convincingly misrepresent someone as doing or saying something they never did or said.

The implications of bad actors using deepfake technology for everything from social engineering attacks to misinformation campaigns have put the security community on edge. The growth in deepfake sophistication and its use in criminal activity is stirring international concern.

In March this year, the Federal Bureau of Investigation (FBI) released a report declaring that malicious actors will almost certainly leverage ‘synthetic content’ for cyber and foreign influence operations in the next 12–18 months.

I have since spoken with several CISOs of prominent global companies about the rise in deepfake technology during security incidents. These were some of their concerns:

- Highly effective phishing

While the basic premise of social engineering attacks remains unchanged, security teams are now noticing deepfakes being used for additional subterfuge in business communication compromise (BCC) attacks or as a component of phishing attempts. These attacks take advantage of remote-working environments to trick employees with a well-timed fake voicemail or voice message created to sound like it is from a familiar person.

In a VMware threat report, 32% of respondents had observed attackers using business communication platforms to facilitate lateral movement as part of their attacks. In fact, phishing campaigns via email or business communication platforms are particularly ripe for this kind of manipulation, as they leverage the implicit trust of employees and users when they are in their familiar virtual work environment.

- Biometrics obfuscation

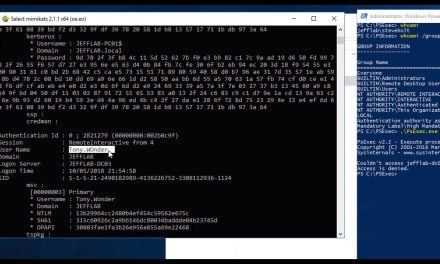

Multi-factor authentication is a cornerstone tactic of cyber vigilance, and biometrics have become a key factor due to their inherent uniqueness. With the proliferation of deepfake technology, a Pandora’s Box of identity authentication issues has been opened.

Deepfakes can now fool biometric authentication protocols and greatly increase the chance of a successful compromise. This fast-growing ‘synthetic identity’ fraud is expected to create significant new roadblocks for organizations’ identity and access management strategies.

- Dark Web

Just as ransomware has evolved into Ransomware-as-a-Service (RaaS) models, deepfake threat actors are following the same path: on the Dark Web as well as on social media platforms, they now offer custom services and tutorials on bypassing security measures using visual and audio deepfake technologies. They are also exchanging deepfake tools and techniques with other threat actors, forming alliances to increase their success rate when targeting organizations.

CISOs must keep up

Today’s distributed workforces are reliant on video conference tools to hold meetings and simply connect with each other. This creates a wealth of audio and video data that can be fed to machine learning software to create compelling deepfake material.

Since nothing is off-limits to modern threat actors, cybercriminals are often early adopters of advanced technologies, hoping to exploit unfamiliarity with them to penetrate security perimeters.

The prevalence of deepfake technology on the Dark Web and the potential for its use in future attacks should serve as a warning to all CISOs and security professionals that we are entering a new era of distrust and distortion at the hands of attackers.

Never trust, always verify—especially in the age of manipulated reality.